“To change something, build a new model that makes the existing model obsolete.”

Web3 Application Architecture

Web3 can be defined in many ways. It can be a new pattern for creating and using digital assets, or it can be the decentralization of network structure and power structure. The following discussion focuses on Web3 application architecture and is limited to the decentralized network structure aspect. I assume readers have a basic understanding of how blockchains work and are familiar with concepts such as “smart contract” and “wallet”.

Web2 vs Web3 architecture

The evolving Web3 stack is built upon our current technologies, and users still rely on web browsers to fetch and interact with web applications. Browsers are complicated and slow-evolving software, so much technological progress depends on working with or around the current ecosystem of browsers.

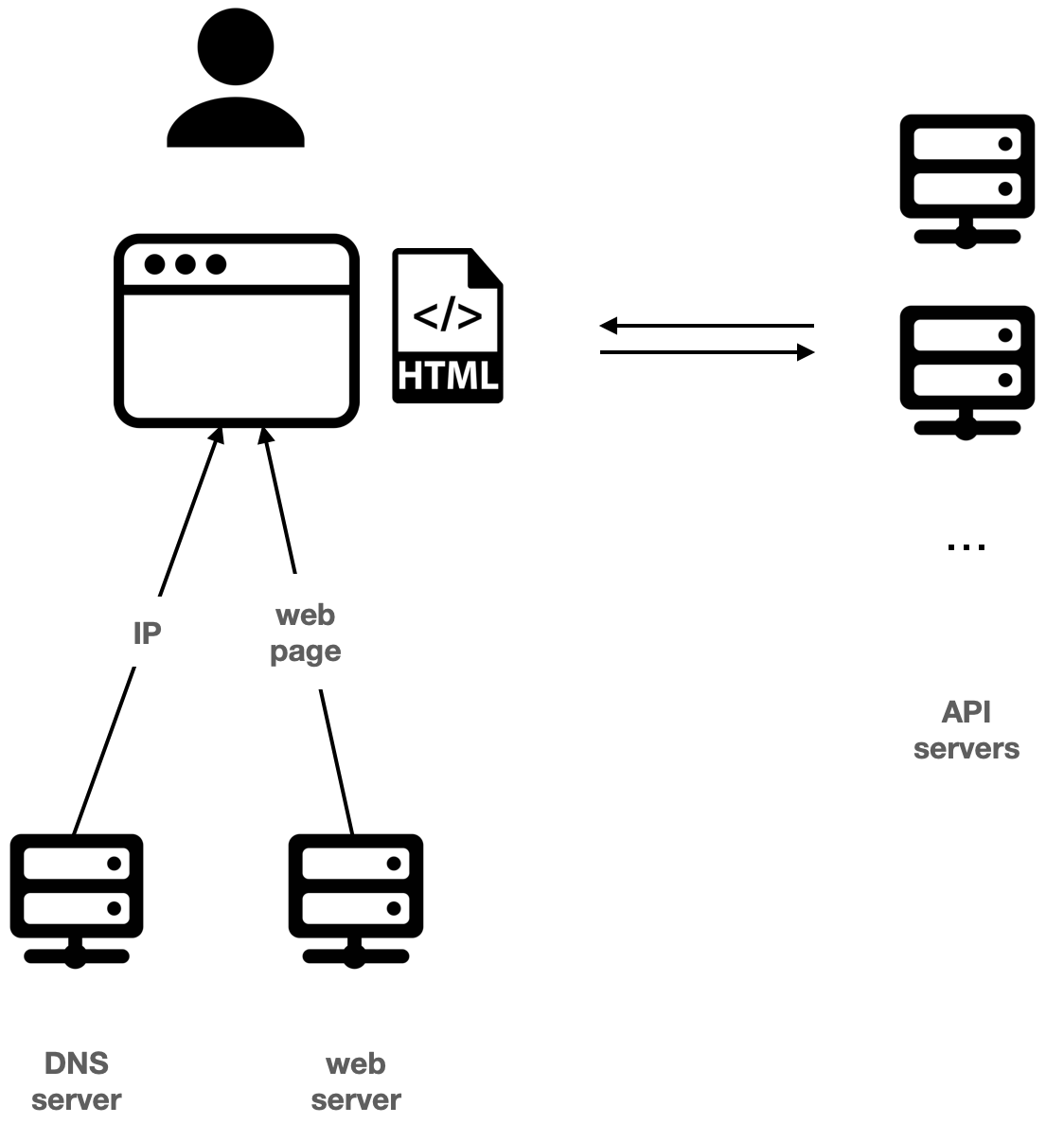

Typically in Web2, after the user enters an address in the browser, for example google.com, the browser fetches the IP address of Google’s server from Domain Name System (DNS) servers, then fetches the web application from Google’s server, and finally renders the web application for the user. To interact with the user, the web application then makes calls to Google’s server through the browser.

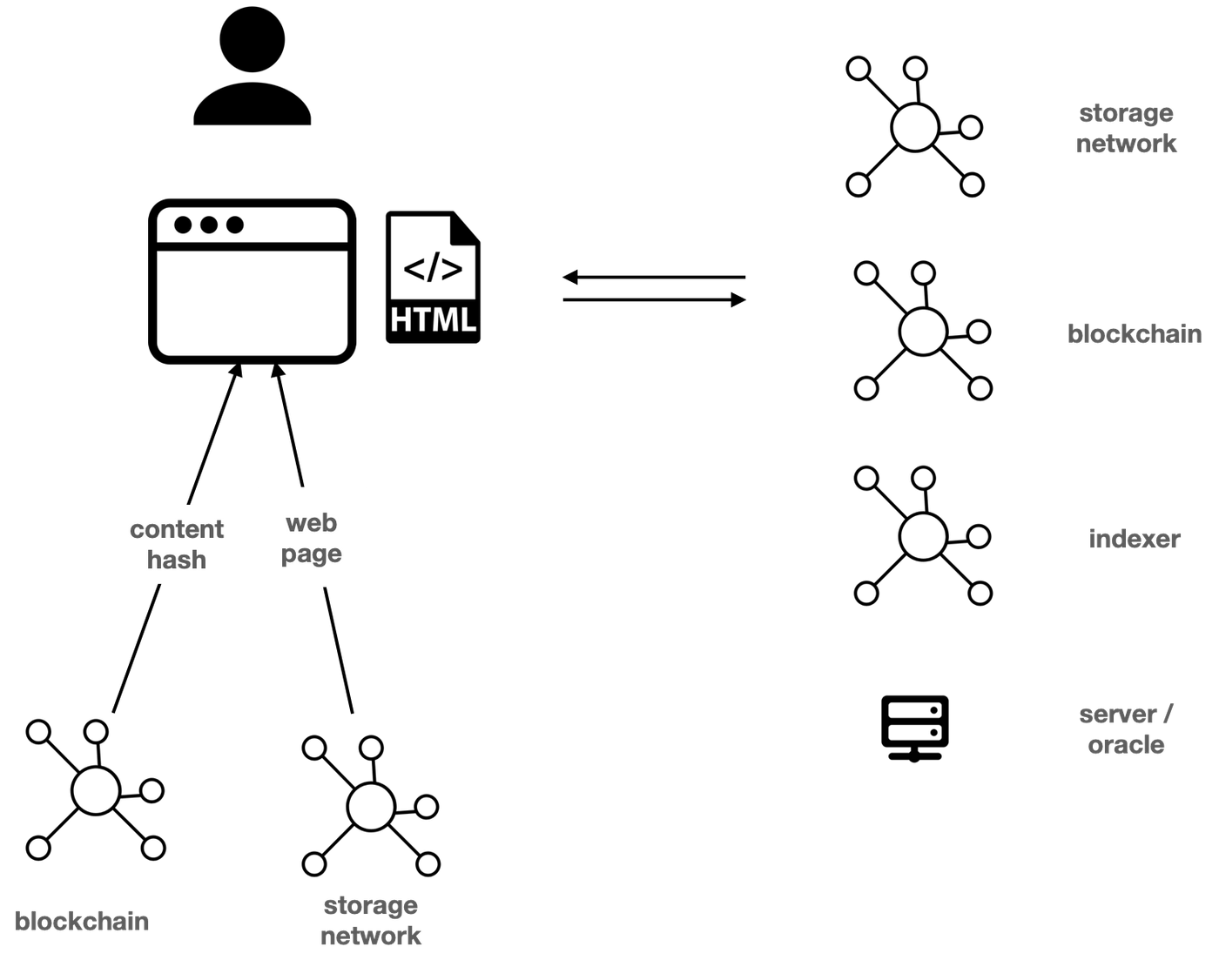

In Web3, the process is similar, except that the browser fetches a content hash from a blockchain-based naming system such as ENS, then fetches the web application by that content hash from a decentralized storage system such as IPFS. Instead of interacting with centralized servers, the web application now needs to interact with a blockchain, decentralized storage, and indexers.

When talking about decentralization, most discussions refer to the API servers behind the web application. But the naming system is sometimes more important, especially for censorship resistance, since it is the first service the user needs and is a common target of attacks such as DNS spoofing.

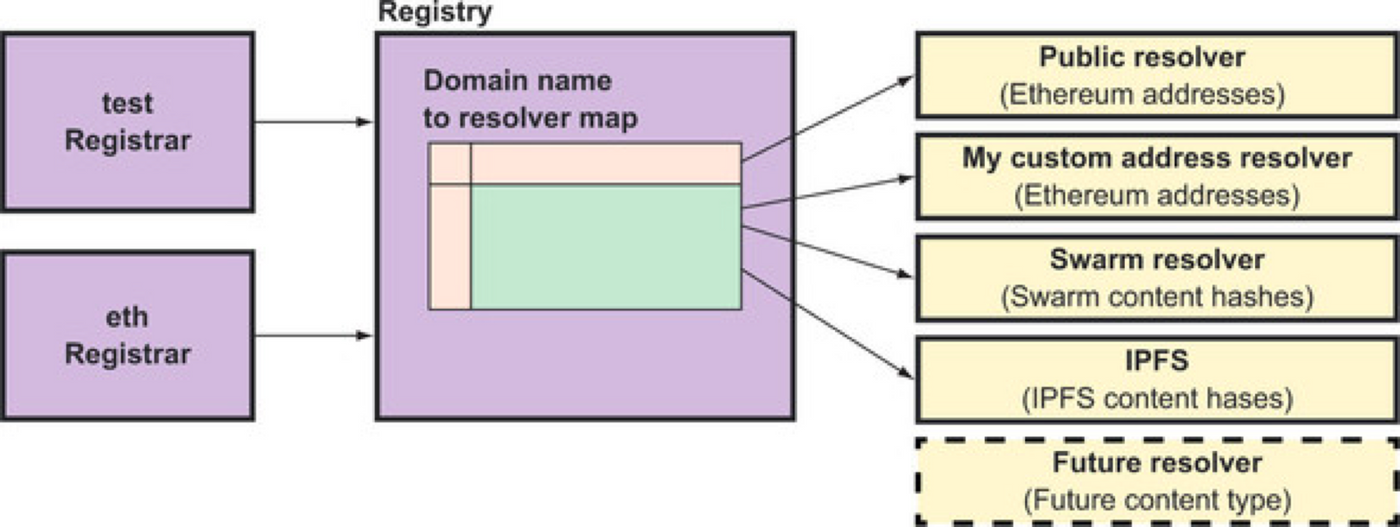

A blockchain is naturally suited to a decentralized naming system. A naming system is a set of mappings between human-readable names and pieces of data, each controlled by its owner. This requires a global ledger, which a blockchain can provide.

One popular example is the Ethereum-based ENS. Human-readable names in ENS can be mapped to Ethereum addresses or content hashes, which applications can use. Users can use an ENS name as the name of their wallet, and projects can use one to point at the content hash of their web application.

Blockchains such as Ethereum and storage networks such as IPFS are often combined in this way to form the backbone of decentralized applications. IPFS is good at storing static data, and Ethereum can be used to dynamically point to the newest version on IPFS. Users query ENS for a content hash, then query IPFS for the application corresponding to that hash.
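To make the division of labor concrete, here is a minimal sketch of a mutable name registry pointing at an immutable content-addressed store. Everything in it is an assumption for illustration: `NameRegistry` and `ContentStore` are in-memory stand-ins rather than the ENS or IPFS APIs, the name `example.eth` and the owner `alice` are hypothetical, and a plain SHA-256 hex digest stands in for a real IPFS address.

```python
import hashlib


def content_hash(data: bytes) -> str:
    """Name a blob by the SHA-256 digest of its bytes (stand-in for an IPFS address)."""
    return hashlib.sha256(data).hexdigest()


class ContentStore:
    """Stand-in for a content-addressed store: blobs are immutable, keyed by hash."""

    def __init__(self):
        self._blobs = {}

    def put(self, data: bytes) -> str:
        key = content_hash(data)
        self._blobs[key] = data
        return key

    def get(self, key: str) -> bytes:
        return self._blobs[key]


class NameRegistry:
    """Stand-in for an on-chain naming system: a mutable pointer per name,
    which only the name's owner may update."""

    def __init__(self):
        self._records = {}  # name -> (owner, content hash)

    def set_content(self, name: str, owner: str, key: str) -> None:
        current = self._records.get(name)
        if current is not None and current[0] != owner:
            raise PermissionError(f"{name} is owned by someone else")
        self._records[name] = (owner, key)

    def resolve(self, name: str) -> str:
        return self._records[name][1]


def load_app(name: str, registry: NameRegistry, store: ContentStore) -> bytes:
    """Two-step lookup: name -> content hash -> application bytes."""
    return store.get(registry.resolve(name))


store, registry = ContentStore(), NameRegistry()
v1 = store.put(b"<html>app v1</html>")
registry.set_content("example.eth", "alice", v1)

# Publishing a new version adds a new blob and moves the pointer;
# the old version stays retrievable under its own hash.
v2 = store.put(b"<html>app v2</html>")
registry.set_content("example.eth", "alice", v2)
```

The static part (the blobs) never changes, so it can be cached and served by anyone, while the only mutable state is the small name record.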

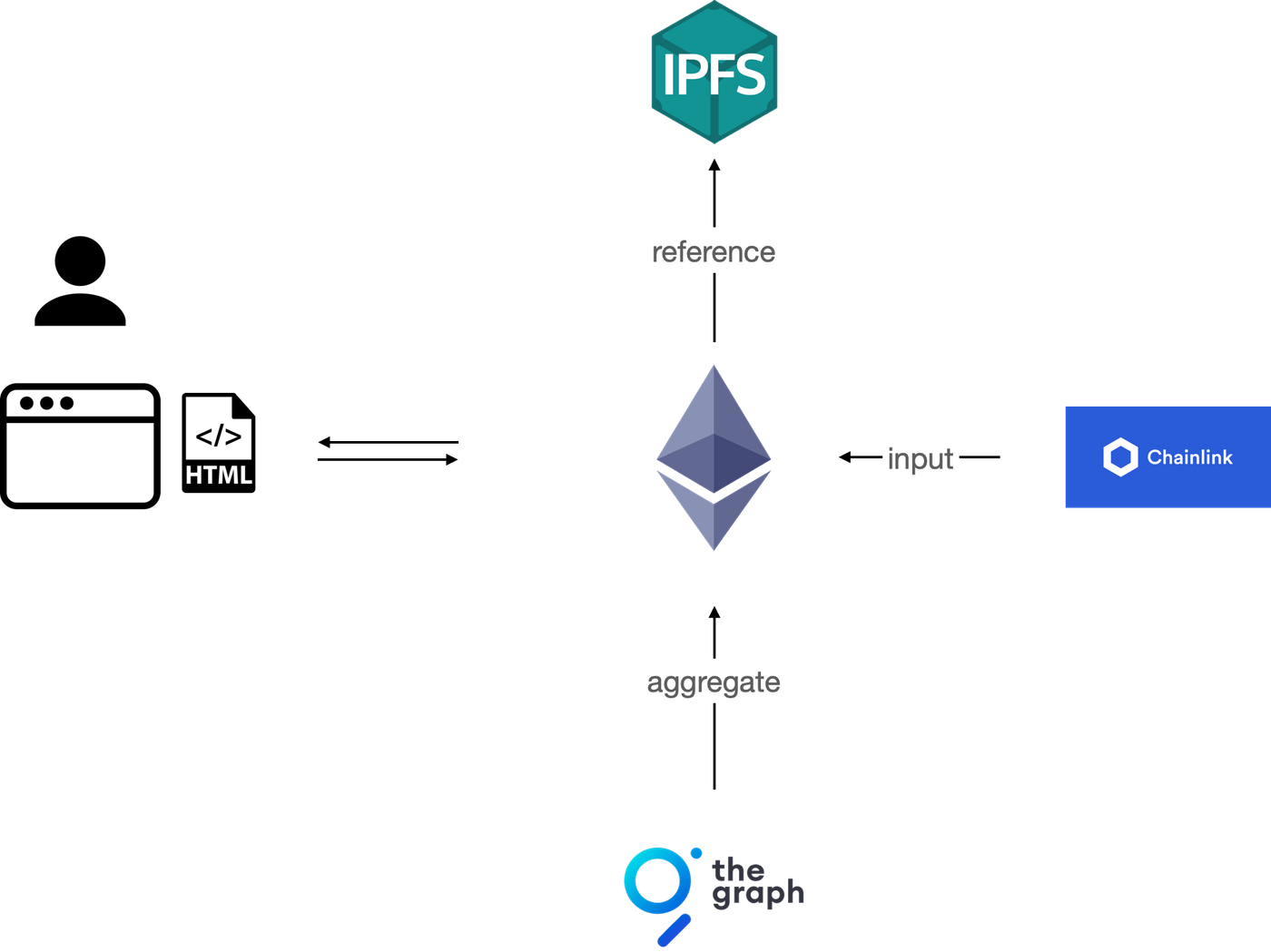

Storage on a blockchain is limited in both cost and form, so applications sometimes need an indexer to aggregate the data from the blockchain. One popular example is The Graph, which allows developers to aggregate blockchain data into the desired data structure, then query the data with a language called GraphQL. Another example is RSS3, which aggregates on-chain data into a content feed for clients to retrieve.

Smart contracts often need information about the off-chain world. For example, a contract that buys and sells tokens may depend on the stock market. In this case, a trusted server or cluster of servers is needed to feed data into the smart contract. Such a server or cluster is commonly called an “oracle”, and Chainlink is one popular example.

In practice, applications use many more servers than the ones mentioned above. For example, most applications cannot connect to Ethereum or IPFS and verify data in a peer-to-peer way, and instead rely on service providers. Such dependencies are important since they determine how centralized or censorship-resistant the application is. We will discuss the details in a later section.

Evolving Direction of Decentralized Network

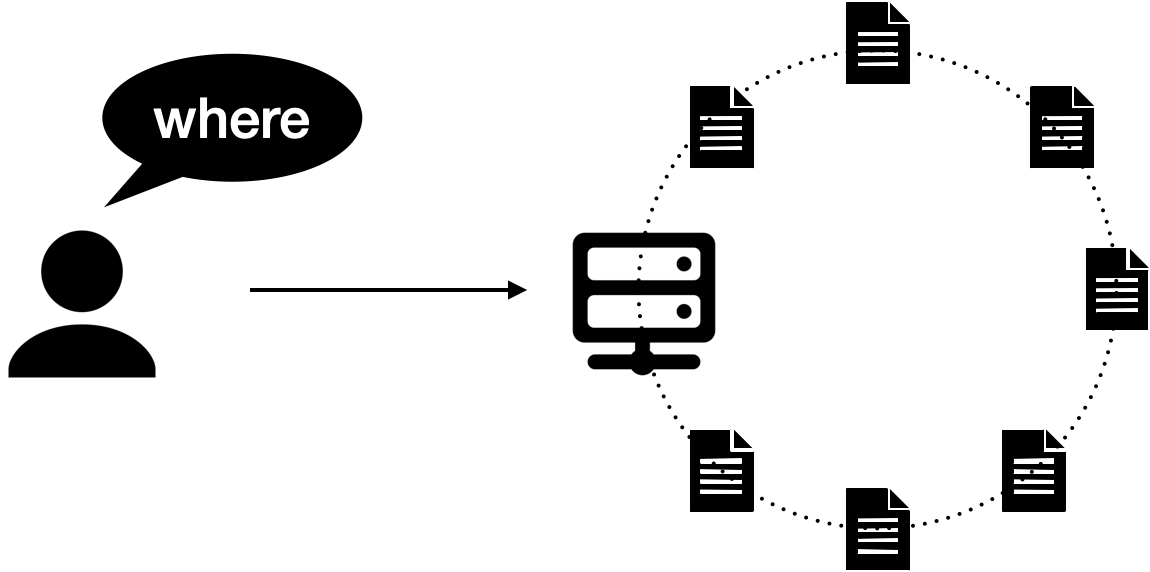

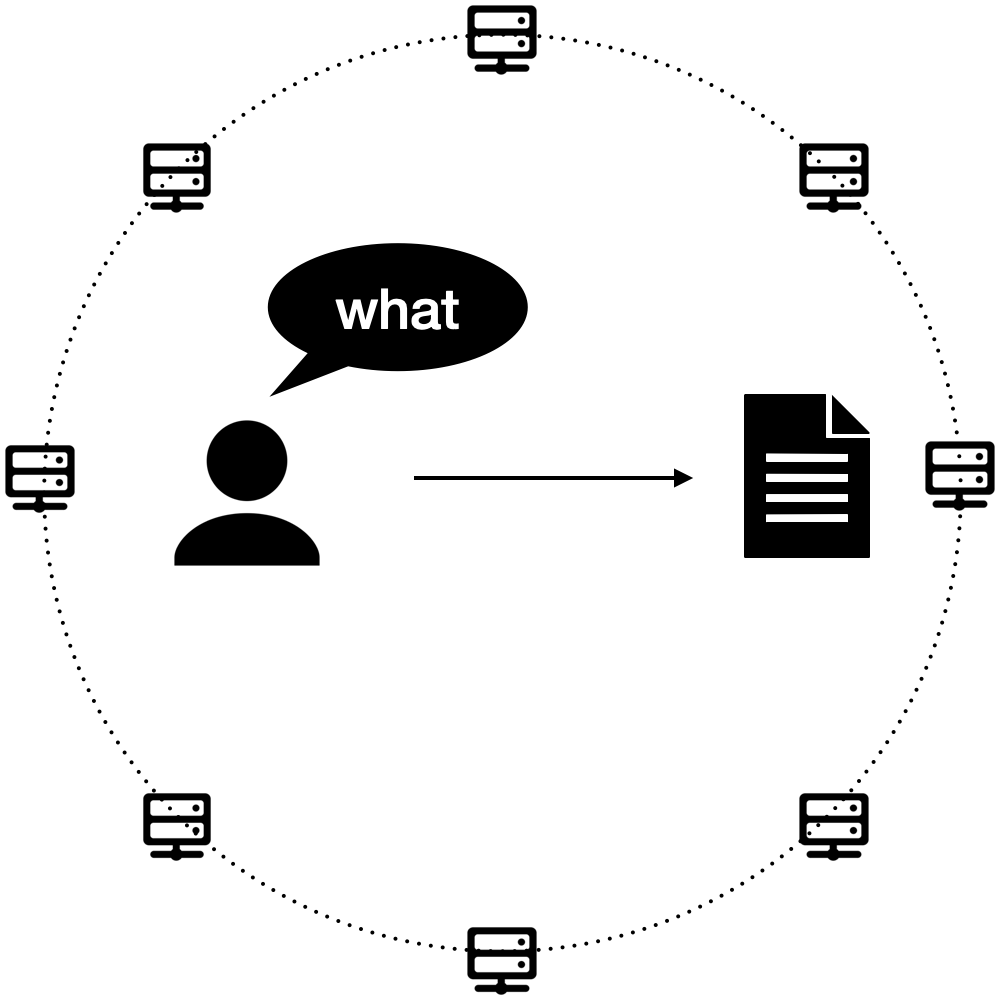

Host-centric vs content-centric

The architecture we are looking at now is very much a result of the past. The Internet is built on a mental model we can call “host-centric”, in which users specify where or with whom they want to connect, for example google.com. The browser resolves the host address and renders whatever data the host sends back, trusting the host server throughout.

For example, a user connects to Facebook’s server to see her feed, and since she trusts Facebook’s server unconditionally, Facebook can manipulate her feed to increase retention or insert advertisements. A host-centric network is inherently centralized around the host.

The TCP/IP stack that the internet is built on is host-centric. This mental model is largely inherited from the telephone network that predates the internet, where users connect to each other through telephones and engage in conversation sessions.

But as the internet has evolved, the majority of use cases are no longer conversation sessions but the publishing and retrieval of named chunks of data, whether an email from a contact, the content feed of a friend, or the video file of a movie.

The host-centric model is very inefficient for these use cases, especially for popular data. For example, when two people sitting in the same room watch the same YouTube video, each of them has to make an independent connection to a faraway YouTube server, which wastes bandwidth.

A more natural mental model for these use cases is the content-centric network, also referred to as a content-based network, named data network, data-oriented network, or information-centric network. The idea behind these various names is similar: instead of specifying where or to whom to connect, the user tells a network of computers what data they want.

In this way, computers can share bandwidth easily, and every single computer is abstracted away and easily replaceable for applications. This makes a content-centric network efficient in distributing popular content, and inherently decentralized.

In 2006, Van Jacobson, a primary contributor to the TCP/IP protocol stack, gave a talk named A New Way to look at Networking at Google Tech Talks, which formalized and articulated this vision. Since then, many research groups have been working on this topic. For a more recent design, you can refer to Lixia Zhang’s talk NDN: A New Way to Communicate.

At the same time, content-centric networking has already been implemented in different forms in different projects. BitTorrent and many commercial CDNs are examples of it, and Git in a sense is also content-centric. More recently, blockchains and decentralized storage systems are also examples of content-centric networks.

Peer discovery and data verification

In a decentralized network, every single computer is abstracted away. Since the user’s client does not connect to a particular computer or peer, it needs to discover peers in the network. When connecting to the network for the first time, the client needs a list of bootstrap peers; after it connects to one or more of them, they introduce the client to more peers.
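The bootstrapping step can be sketched as a breadth-first walk that starts from the bootstrap list and keeps asking newly found peers for the peers they know. This is only an illustration of the idea: the `ask_for_peers` callback and the peer names are hypothetical, and real networks typically use more elaborate schemes such as distributed hash tables.

```python
from collections import deque


def discover_peers(bootstrap, ask_for_peers, limit=50):
    """Breadth-first peer discovery.

    bootstrap      -- peers shipped with the client for the first connection
    ask_for_peers  -- hypothetical callback: peer -> iterable of peers it knows
    limit          -- stop once this many peers are known
    """
    known = list(dict.fromkeys(bootstrap))  # de-duplicate, keep order
    seen = set(known)
    queue = deque(known)
    while queue and len(known) < limit:
        peer = queue.popleft()
        for candidate in ask_for_peers(peer):
            if candidate not in seen and len(known) < limit:
                seen.add(candidate)
                known.append(candidate)
                queue.append(candidate)
    return known


# A toy network: each peer maps to the peers it would introduce us to.
network = {
    "boot-1": ["a", "b"],
    "a": ["b", "c"],
    "b": ["boot-1"],
    "c": [],
}
peers = discover_peers(["boot-1"], lambda p: network.get(p, []))
```

After the first run the client can remember the peers it found, so the bootstrap list matters mostly for the very first connection.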

If the client is connecting to random nodes that are untrusted, it needs to verify that the data it receives does match the data it requested. This means that when requesting data, the data needs to be named in a way that can be verified.

One way is to use the content hash of the data as its name. A content hash is a cryptographic checksum of the data, so upon receiving the data, the client can verify that the hash of the received content matches the requested name. This is suitable for requesting static content, which is efficient to cache and distribute. IPFS addresses, Ethereum transaction hashes, and Git commit SHAs are examples of content hashes.

For dynamic data, since the data can be updated, the client cannot know the content hash in advance. The client can instead verify the identity of the publisher by checking a cryptographic signature attached to the data after receiving it. Dat and IPNS are examples of this, and the verification of balances in Bitcoin also relies on verifying data publishers.

As we can see, to form a decentralized and trustless network, peer discovery and data verification need to be performed locally by the browser and the web app. However, browsers are mature and complicated software that takes a long time to evolve. Although browser projects such as Brave Browser, Unstoppable Browser, and Agregore Browser are radically experimenting with new protocols, most web apps that intend to be decentralized still rely on servers.
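As a small sketch of this check, the client below asks untrusted peers for a name and accepts only a response whose hash equals that name. The peers are modeled as plain Python callables, which is an assumption for illustration, and a SHA-256 hex digest stands in for whatever hash format a real network uses.

```python
import hashlib


def name_of(data: bytes) -> str:
    """The name of a blob is the SHA-256 digest of its content."""
    return hashlib.sha256(data).hexdigest()


def fetch_verified(name: str, untrusted_peers) -> bytes:
    """Ask peers in turn and return the first response that hashes to `name`.

    Each peer is a hypothetical callable: name -> bytes, or None if it has nothing.
    """
    for peer in untrusted_peers:
        data = peer(name)
        if data is not None and name_of(data) == name:
            return data  # verified locally, no trust in the peer required
    raise LookupError(f"no peer returned valid content for {name}")


original = b"hello, content-centric world"
wanted = name_of(original)


def tampering_peer(name):
    return b"something else entirely"


def honest_peer(name):
    return original if name == wanted else None


result = fetch_verified(wanted, [tampering_peer, honest_peer])
```

Because verification happens on the client, it does not matter which peer served the bytes; a bad response is simply discarded and the next peer is tried.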
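Publisher verification can be sketched with only a hash function by using a Lamport one-time signature. This scheme is chosen here purely because it needs nothing beyond the standard library; it is not what Dat, IPNS, or Bitcoin use, and each Lamport key must sign only one message, whereas production systems rely on reusable signature schemes. The function names below are illustrative.

```python
import hashlib
import secrets


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _bits(message: bytes):
    """The 256 bits of SHA-256(message), most significant bit first."""
    digest = _h(message)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def generate_keypair():
    """Private key: 256 pairs of random secrets. Public key: their hashes."""
    private = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    public = [(_h(zero), _h(one)) for zero, one in private]
    return private, public


def sign(private, message: bytes):
    """Reveal one secret per message bit. A key must never sign two messages."""
    return [private[i][bit] for i, bit in enumerate(_bits(message))]


def verify(public, message: bytes, signature) -> bool:
    """Check that every revealed secret hashes to the published value."""
    if len(signature) != 256:
        return False
    return all(
        _h(revealed) == public[i][bit]
        for i, (revealed, bit) in enumerate(zip(signature, _bits(message)))
    )


# The publisher's public key plays the role of the stable name;
# the content behind it can change as long as it is signed.
private_key, public_key = generate_keypair()
update = b"feed: latest post"
signature = sign(private_key, update)
```

The client only needs the publisher's public key in advance. Any peer can relay the update, since a forged or altered update fails `verify`.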

Connecting to a decentralized network

Within a decentralized network such as Ethereum or IPFS, nodes connect in a peer-to-peer way. However, client software in most applications does not have the resources to run a full node locally and therefore requires a service provider to connect to the network.

The connection to a decentralized network is thus often the point of centralization. Since application developers need to keep paying the service provider, it is also a financial “leaking point” that requires an income stream to sustain the application.

In Ethereum, the cost of storing data is paid to the network in the form of gas fees. The cost of reading data, however, is not. This is also the case for most other blockchains. In IPFS, the network does not include an economic model and the cost of storing data is handled by each node individually, similar to BitTorrent and Dat. Applications then need to use or implement an incentive layer for nodes to provide bandwidth and store data for end-users.

How applications connect to decentralized networks is what much of Web3 infrastructure development revolves around. It is also indicative of how decentralized and mature Web3 is.

We will use Ethereum and IPFS as examples for the following discussion. The case of Ethereum is typical for blockchain, and the case of IPFS is typical for p2p storage networks.

Connecting to Ethereum

Centralized Service Provider

The most common way to connect to the Ethereum network is via a centralized service provider. This can be a commercial service provider such as Alchemy or Infura, or a service run by the application developer.

Such a provider offers a standardized service and is easily replaceable, but it is still a single point of failure that can be blocked or tampered with. In addition, the client and the user have to trust the service provider, with no way to verify the data received.
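The "standardized service" is usually Ethereum's JSON-RPC interface over HTTP, which is why providers are interchangeable. The sketch below builds such a request with the standard library. The helper names are illustrative, the address is a placeholder, and the endpoint URL passed to `call_provider` would be whatever a provider issues, so nothing here is sent over the network as written.

```python
import json
import urllib.request


def build_rpc_request(method, params, request_id=1):
    """Build a JSON-RPC 2.0 request body for an Ethereum node."""
    return {"jsonrpc": "2.0", "id": request_id, "method": method, "params": params}


def call_provider(endpoint_url, method, params):
    """POST a request to a provider and return its result.

    The client has no way to check the answer here: it simply trusts
    whatever the provider sends back.
    """
    payload = json.dumps(build_rpc_request(method, params)).encode()
    request = urllib.request.Request(
        endpoint_url, data=payload, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        body = json.load(response)
    if "error" in body:
        raise RuntimeError(body["error"])
    return body["result"]


def parse_quantity(hex_value: str) -> int:
    """Ethereum JSON-RPC encodes integers as 0x-prefixed hex strings."""
    return int(hex_value, 16)


# Placeholder address, for illustration only.
address = "0x" + "00" * 20
balance_request = build_rpc_request("eth_getBalance", [address, "latest"])
```

Switching providers only means changing `endpoint_url`, which illustrates both sides of the trade-off: easy replacement, but every answer is taken on trust.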

Decentralized Service Provider

A service provider can also be decentralized, so that instead of connecting to a particular Ethereum node, the client connects to a network of nodes, among which tokens can be used to incentivize bandwidth provision for clients. One example is Pocket Network, which is currently gaining traction among DApp developers.

However, since the client cannot do routing locally, it still needs to rely on a centralized server to route or forward traffic to particular nodes. And because clients do not have a way to verify the integrity of the data received, the network needs a reputation and staking system to punish bad behavior.

Indexer networks

Indexer networks are another way to connect to Ethereum, but instead of providing original blockchain data to the client through a standard API, indexer nodes provide an aggregated version of blockchain data. This is useful since data structures in blockchains are very limited, and developers can use indexers to aggregate data into suitable shapes.

One example of an indexer network is The Graph, which allows users to query different blockchains in GraphQL, a language for describing data. Developers can submit a GraphQL schema and resolvers to an indexer network, which aggregates data according to the schema, and then query the indexers with GraphQL queries.
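What an indexer does can be illustrated with a toy aggregation over a list of transfer events. This is not The Graph's API: the event fields, account names, and function names are assumptions made up for the example, and a real indexer would read events from a node and keep the index up to date as new blocks arrive.

```python
def build_index(events):
    """Aggregate a flat list of transfer events into per-account totals."""
    index = {}
    for event in events:
        for role, account in (("sent", event["from"]), ("received", event["to"])):
            entry = index.setdefault(account, {"sent": 0, "received": 0})
            entry[role] += event["value"]
    return index


def top_receivers(index, n):
    """A query the raw chain cannot answer cheaply: who received the most?"""
    ranked = sorted(index, key=lambda account: index[account]["received"], reverse=True)
    return ranked[:n]


# Hypothetical events, in the order they appeared on chain.
events = [
    {"block": 1, "from": "alice", "to": "bob", "value": 5},
    {"block": 2, "from": "bob", "to": "carol", "value": 2},
    {"block": 3, "from": "alice", "to": "carol", "value": 4},
]
index = build_index(events)
```

The chain stores only the individual events. The per-account view exists only in the indexer, which is why the client has to trust the indexer unless it recomputes the aggregation itself.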

Since a client cannot verify the integrity of the aggregated data, The Graph also needs a reputation system and a staking mechanism, so that good behavior is encouraged and bad behavior is punished.

Light node

As we discussed above, in a truly decentralized network, the client needs to verify data integrity locally and connect to the network in a trustless way. Nodes in Bitcoin or Ethereum work like this, but they need too much storage to be embedded in clients for most applications.

This is why Ethereum light nodes, which are not yet common but are under rapid development, are so important. Light nodes can verify data integrity without storing all the blockchain data, so ordinary clients with light nodes can connect to the Ethereum network in a peer-to-peer and trustless way, forming a truly decentralized network.

However, since light nodes need to download data from full nodes, they act like leechers that rely on the bandwidth of others. It is unknown how big this problem is, since light nodes are not yet widely adopted. One possible solution is to combine light nodes with an incentive system for bandwidth sharing, similar to Pocket Network, so that light nodes can pay for the bandwidth they need but remain decentralized.
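The core trick that lets a client verify data without storing everything is a Merkle proof: given a small trusted root hash, a short list of sibling hashes is enough to prove that one item belongs to a large set. The sketch below uses a simple binary Merkle tree over SHA-256 as an assumption for illustration. Ethereum's actual tries and light client protocols are more involved, and the function names and sample transactions are made up.

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _next_level(level):
    """Hash adjacent pairs; an odd last node is paired with itself."""
    if len(level) % 2:
        level = level + [level[-1]]
    return [_h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves):
    """Root hash of a non-empty list of byte strings."""
    if not leaves:
        raise ValueError("need at least one leaf")
    level = [_h(leaf) for leaf in leaves]
    while len(level) > 1:
        level = _next_level(level)
    return level[0]


def merkle_proof(leaves, index):
    """Sibling hashes from leaf to root, each tagged with whether it sits on the left."""
    level = [_h(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2:
            level = level + [level[-1]]
        sibling = index ^ 1  # the other node in the same pair
        proof.append((level[sibling], sibling < index))
        level = _next_level(level)
        index //= 2
    return proof


def verify_proof(leaf, proof, root) -> bool:
    """What the light client runs: recompute the path and compare with the root."""
    node = _h(leaf)
    for sibling, sibling_is_left in proof:
        node = _h(sibling + node) if sibling_is_left else _h(node + sibling)
    return node == root


transactions = [b"tx-a", b"tx-b", b"tx-c", b"tx-d", b"tx-e"]
root = merkle_root(transactions)         # small value the client already trusts
proof = merkle_proof(transactions, 4)    # produced by a full node on request
```

The full node does the heavy lifting of storing all the data and building the proof, while the client stores only the root and checks a handful of hashes.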

Connecting to IPFS

The most common way to read data from IPFS is through public gateways, often provided for free by volunteer teams. The most common way to store data is through a centralized service provider, which often charges the developer a fee. The service provider can be Infura, Pinata, or Matters.

Both cases introduce a single point of failure that makes the system easy to block and attack, and both rely on a company continuing its operation. They also rely on someone providing resources for free or finding another way to cover the cost.

Another way to connect to IPFS is via a network of nodes incentivized by a token. Users pay tokens for their data to be stored by nodes, for example with Crust Network, Arweave (via IPFS bridge), or Filecoin. Filecoin also allows users to pay for reading data. Meson Network provides another way to read from IPFS, by routing client software to a nearby IPFS node while incentivizing the node with a token.

However, as long as the client software cannot perform routing and data verification in a peer-to-peer way, it needs to trust and rely on a centralized server, whether to make a storage deal or to redirect to an IPFS node, which is again a single point of failure.

The only way to have a fully decentralized network is to run an IPFS node directly in the client. Compared to Ethereum full nodes, IPFS nodes require far fewer resources and have been implemented in desktop applications such as Brave Browser, Audius, and OpenBazaar. In this way, users share data directly among themselves, as they have successfully done on BitTorrent and eDonkey.

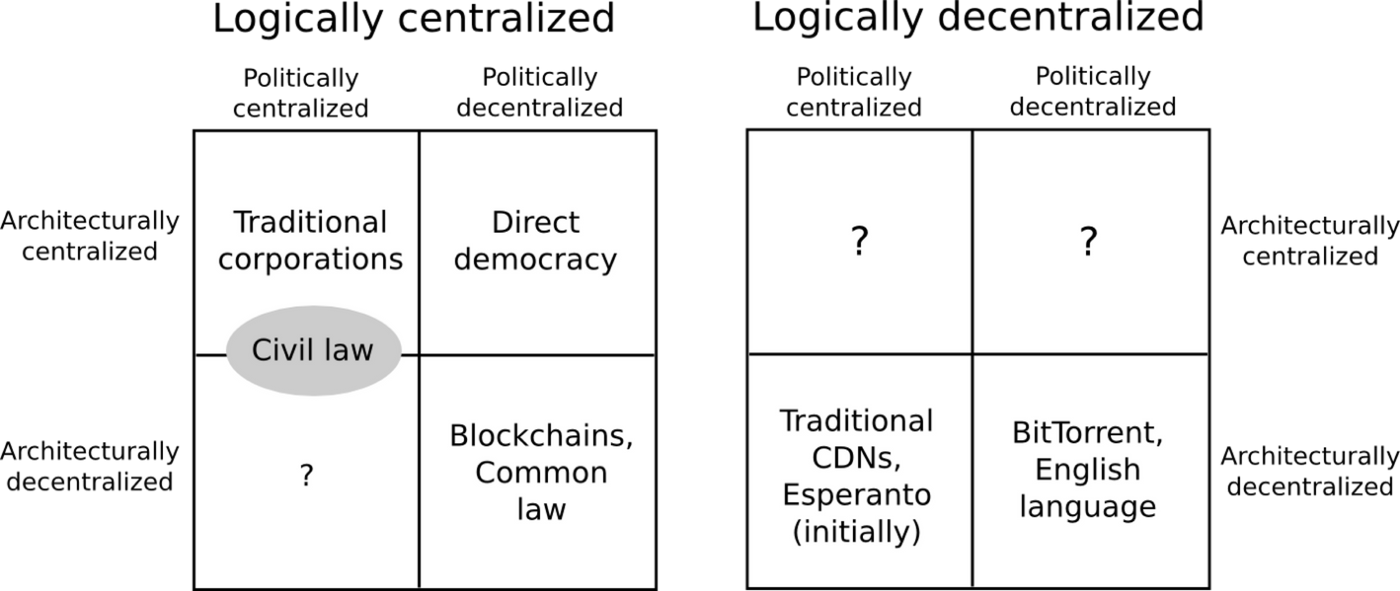

Types of decentralization

The above discussion assumed decentralization as a goal. It is helpful to differentiate types of decentralization to understand whether they are necessary or practical. Vitalik Buterin proposed a helpful classification of decentralization as logical, architectural, and political.

Whether a system can still operate as intended when split in half reveals how logically decentralized it is. How many computers form the network, and in what structure, determines architectural decentralization. And how decisions are made by stakeholders determines political decentralization.

Logical decentralization is most resilient to censorship and attacks since a part of the network still operates as designed when disconnected from the rest. Holochain and Secure Scuttlebutt are two great examples, and in theory, they are very secure and robust. However, most applications need some form of shared token, which requires a global ledger and is therefore logically centralized.

Architectural decentralization also provides some robustness, since the network does not rely on a single computer. From the above discussion, we can see that it is also important for peer routing and data verification to happen on the client side, so that clients do not need to rely on a single server.

However, since peer routing and data verification are computationally expensive, they largely depend on the browser. The standardization and adoption of such mechanisms in browsers can be seen as an indicator of the level of decentralization of web3, or even as the maturity of the web3 tech stack for application developers. Before browser adoption, developers can also use technologies such as WebAssembly to implement routing and verification, if there are enough incentives for decentralization.

Political decentralization is probably a more important form of decentralization, since all applications evolve, and it should be the people who use and develop the application who collectively decide its direction. Political decentralization reduces the speed of decision-making, so it is often practical to decentralize decision-making gradually as an application matures.

Political decentralization is beyond the scope of this discussion. As a problem that all communities and societies try to solve, it is beyond the scope of any single discussion. It likely does not have a clear solution but is a topic we all can and should participate in, regardless of our understanding of technologies.
